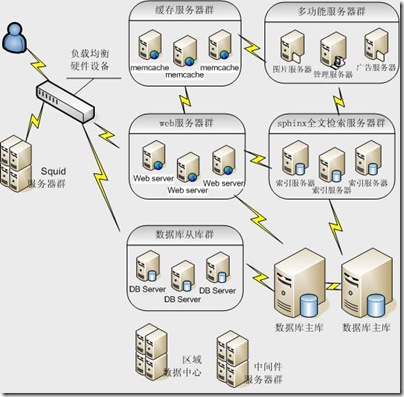

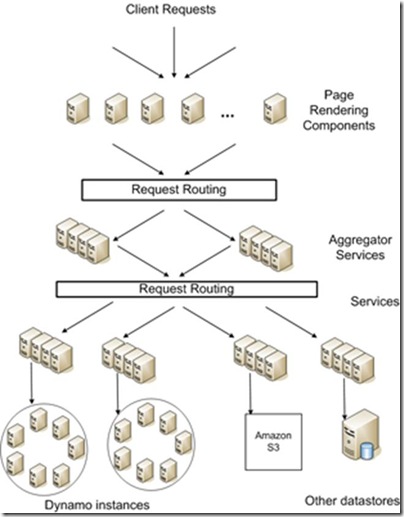

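1. System Overview Diagrams

Figure 1.1 System architecture overview

Figure 1.2 A more complete system architecture diagram

2. Main Technologies Used in the System

The items below are listed in no particular order.

2.1 Front End

JavaScript, HTML, CSS, Silverlight, Flash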

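jQuery is a JavaScript library that simplifies HTML manipulation, event handling, animation, and asynchronous requests, and is aimed at rapid web development. The latest version is 1.7.1, available as a development build (229 KB) and a production build (31 KB). It is lightweight and small, supports CSS 1-3, and works across browsers. Used by Vancl, Dangdang, and Amazon.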

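Viewed as frameworks, front-end libraries can be grouped into the following levels:

- Level zero: the base layer, which extends the methods of built-in objects and handles Ajax communication; fairly minimal.

- Level one: the effect layer, which adds common effect functions such as tween, drag, maskLayer, and fade.

- Level two: the component layer, which provides components such as dialogs, lists, trees, and calendars.

- Level three: the application layer, a complete front-end platform that lets users define modules implementing specific functionality.

Some UI libraries and frameworks stop at level zero (Prototype.js) or level one (jQuery, MooTools); others go as far as level three, such as Dojo and Ext.

Compact and flexible, simple and practical, and pleasant to use. Used by Taobao and Tencent.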

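2.2 Back End

PHP, Perl, ASP, Ruby, Python, .NET, Java, JSP (JavaServer Pages)

Static languages: Java, .NET

Dynamic (scripting) languages: PHP, ASP, JSP, Perl, Python, Ruby

PHP is a long-established scripting language. Although many newer languages have appeared, PHP remains the first choice for most websites; reportedly about 70% of websites worldwide use it. LAMP (Linux + Apache + MySQL + PHP) is the classic combination.

ASP stands for Active Server Pages. Microsoft developed it as a replacement for CGI scripts. It can interact with databases and other programs, and it is a simple, convenient programming tool. ASP page files use the .asp extension. It is commonly used for dynamic websites.

JSP (JavaServer Pages) is a dynamic web page technology standard initiated by Sun Microsystems and established together with many other companies. JSP is somewhat similar to ASP: it inserts Java code fragments (scriptlets) and JSP tags into traditional HTML files (*.htm, *.html) to form JSP files (*.jsp). Web applications built with JSP are cross-platform and run on Linux as well as on other operating systems.

Python and Ruby are open-source languages that have risen in recent years. They are easy to pick up and well suited to rapid prototyping, and they are also fairly mature scripting languages. Python is the main language at Douban, and sites such as Google and YouTube use it as well.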

http://www.python.org/about/quotes/

Twitter's front end mainly uses Ruby; Motorola and NASA also use Ruby.

http://www.ruby-lang.org/en/documentation/success-stories

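2.3 Cache

Open source.

Squid server cluster: Squid sits in front of the web servers as a cache server and caches requests to speed up the web tier. It caches most static resources (images, JS, CSS, and so on) and can also cache frequently visited pages, returning them directly to visitors and reducing the load on the application servers.

Open source.

Used by Wikipedia, Flickr, Twitter, and YouTube.

memcached server cluster: memcached is a distributed caching product used by many large websites. It can handle an arbitrary number of connections and uses non-blocking network I/O. It works by reserving a region of memory and building hash tables in it, which memcached manages itself. The most time-consuming task in a typical web application is retrieving data from the database, and database load rises when many users run the same SQL query. When a query hits the memcached cache, the data is read directly from memcached's memory. Each cache hit replaces one round trip to the database server, so fewer requests reach the database, which indirectly improves database performance and makes the application run faster. In short, memcached improves a site's responsiveness by caching objects in memory to reduce database queries, and its most attractive feature is support for distributed deployment.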
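The read path described above is often called the cache-aside pattern. The following is a minimal sketch of it, not a real memcached integration: a plain dict stands in for the memcached client, and `FakeDatabase`, `get_user`, and the `user:<id>` key format are hypothetical names chosen for illustration.

```python
class FakeDatabase:
    """Stand-in for a real database; counts how many queries reach it."""

    def __init__(self, rows):
        self.rows = rows
        self.query_count = 0

    def query_user(self, user_id):
        self.query_count += 1
        return self.rows.get(user_id)


def get_user(user_id, cache, db):
    """Cache-aside read: try the cache first, fall back to the database."""
    key = f"user:{user_id}"          # hypothetical key naming scheme
    user = cache.get(key)
    if user is None:                 # cache miss: one round trip to the database
        user = db.query_user(user_id)
        if user is not None:
            cache[key] = user        # populate the cache for later readers
    return user


db = FakeDatabase({42: {"name": "alice"}})
cache = {}  # assumption: a dict standing in for a memcached client

first = get_user(42, cache, db)    # miss, reads from the database
second = get_user(42, cache, db)   # hit, served from the cache
print(db.query_count)              # the second read never reached the database
```

A real deployment would also need an expiry or invalidation policy so that updated rows do not stay stale in the cache; that part is left out here.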

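2.4 Middleware

Java, .NET, C, C++

2.5 Storage

2.5.1 Relational Databases

Oracle, MySQL, MSSQL, PostgreSQL

PostgreSQL is a relational database with 15 years of history. It is free and open source, and runs on Linux, Unix, and Windows. It supports transactions, primary and foreign keys, joins, views, triggers, and stored procedures. It includes a large number of data types and also supports large objects. It can be used from many languages, including C, C++, Java, C#, Perl, Python, and Ruby.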

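2.5.2 NoSQL Storage

MongoDB, Redis, CouchDB, Cassandra, HBase

NoSQL (Not Only SQL) complements relational databases where they fall short. For example:

- High-concurrency reads and writes. Tens of thousands of reads and writes per second put a strain on a relational database.

- Efficient storage of and access to massive data sets. For example, reading and writing a table with 200 million rows is relatively inefficient.

- High scalability. Upgrading or expanding a relational database, or adding nodes, often requires downtime and data migration.

Some scenarios also do not need what a relational database provides, for example:

- Transactional consistency. Some scenarios need no transactions and have no strict data-consistency requirements.

- Real-time reads and writes. Some scenarios do not need reads and writes to be real-time.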

http://baike.baidu.com/view/2677528.htm

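MongoDB is a document-oriented NoSQL database that supports master-slave replication. Many large companies use it, and it supports many programming languages.

http://www.mongodb.org/display/DOCS/Production+Deployments

Users include Disney, SAP, Taobao (monitoring data), SourceForge, and Dianping (user behavior analysis, users, and groups).

Redis is a key-value NoSQL store sponsored by VMware, with support for many programming languages. Twitter, Taobao, and Sina Weibo all use it.

CouchDB, Cassandra, and HBase are all Apache projects.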

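2.5.3 File Systems

Commercial middleware, custom file systems

2.6 Operating Systems

Windows, Linux, Unix

2.7 Web Application Server Software

IIS, Apache, Tomcat, JBoss, WebLogic (BEA, commercial, paid), WebSphere (IBM, commercial, paid), lighttpd, nginx

IIS

Exclusive to Microsoft Windows.

lighttpd is open-source software led by a German developer. Its goal is to provide a secure, fast, compatible, and flexible web server environment designed for high-performance websites. It has very low memory overhead, low CPU usage, good performance, and a rich set of modules. lighttpd is one of the better lightweight open-source web servers. It supports important features such as FastCGI, CGI, Auth, output compression, URL rewriting, and Alias.

Open source.

Nginx + PHP (FastCGI) + Memcached + MySQL + APC is currently a mainstream way to build a high-performance server. It suits medium and large websites, and small site owners can use this combination too.

Because Nginx surpasses Apache in performance and stability, more and more websites in China use Nginx as their web server. They include portal channels such as MapBar (China's largest electronic map service), Sina Blog, Sina Podcast, and NetEase News; video-sharing sites such as 6rooms and 56.com; well-known forums such as the official Discuz! forum and the SMTH community; and newer Web 2.0 sites such as Douban, YUPOO photo albums, Hainei SNS, and Xunlei Online. Many more sites run Nginx as well.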

2.8 Frameworks

JavaScript: jQuery, Prototype.js, KISSY, Ext JS.

.NET: Enterprise Library, Unity, NHibernate, Spring.NET, iBATIS, MVC, MEF, Prism, log4net, 23 open-source projects, Lucene.NET

Java: Hibernate, Spring, Struts, EasyJF, log4j, open-source projects, Lucene

Python: Django, Flask, Bottle, Tornado, Uliweb, web.py

Ruby: Rails

PHP: PEAR

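3. Evolution of Mainstream Website Architecture

3.1 Step 1: Physically separate the web server and the database

At first the website may run on a single server. Because the site has some distinctive appeal, it attracts visitors, and gradually you notice that the load keeps rising and responses keep getting slower. The most obvious symptom at this stage is that the database and the application interfere with each other: when the application has a problem, the database easily runs into trouble, and vice versa. This leads to the first step: physically separate the application and the database onto two machines. This needs no new technology, but it works. The system returns to its earlier response speed, supports more traffic, and the application and database no longer affect each other.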

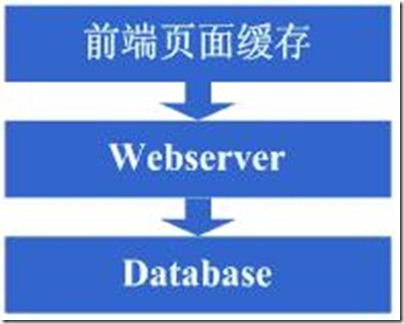

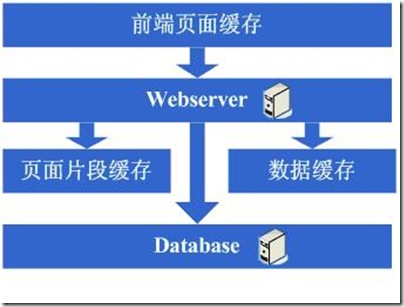

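Figure 3.1

3.2 Step 2: Add page caching

The good times do not last. As more people visit, responses slow down again. The cause turns out to be too many database operations, which leads to fierce competition for database connections. You cannot simply open more connections, because that would put too much pressure on the database machine. So you consider caching to reduce both the contention for database connections and the read load on the database. A common first choice is Squid or a similar tool to cache the relatively static pages in the system (for example, pages that change only every day or two); generating static pages is another option. This requires no changes to the application, yet it noticeably reduces the load on the web server and the contention for database connections. So Squid is introduced to cache the relatively static pages.

Figure 3.2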

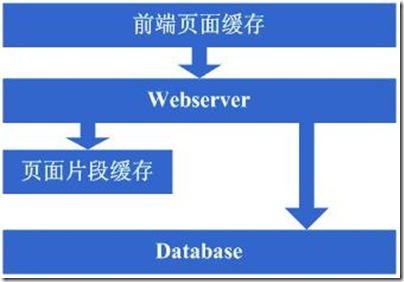

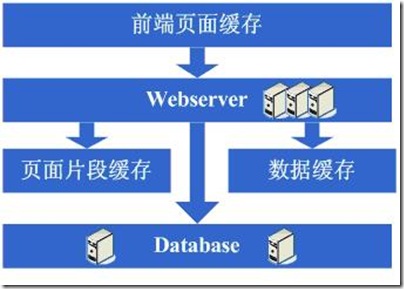

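3.3 Step 3: Add page fragment caching

With Squid in place, the system as a whole does get faster and the load on the web server starts to drop. But as traffic grows, the system begins to slow down again. Having seen the benefit of caching with Squid, you start to wonder whether the relatively static parts of dynamic pages could be cached too. This leads to a page fragment caching strategy such as ESI, which is then used to cache the relatively static fragments of dynamic pages.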
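The idea of caching only the slow-changing fragments of a dynamic page can be sketched inside the application itself. This is an illustrative analogy rather than ESI, which is markup processed by a caching proxy; `FragmentCache`, `render_nav`, and the one-hour TTL are assumptions made for the example.

```python
import time


class FragmentCache:
    """Caches rendered HTML fragments, each with its own time-to-live."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock      # injectable clock so expiry can be tested
        self._entries = {}       # fragment name -> (expires_at, html)

    def get_or_render(self, name, ttl_seconds, render):
        now = self._clock()
        entry = self._entries.get(name)
        if entry is not None and entry[0] > now:
            return entry[1]      # still fresh: reuse the cached fragment
        html = render()          # missing or expired: render again
        self._entries[name] = (now + ttl_seconds, html)
        return html


render_calls = {"nav": 0}


def render_nav():
    """Relatively static fragment (hypothetical): the navigation bar."""
    render_calls["nav"] += 1
    return "<ul><li>Home</li></ul>"


def render_page(cache, username):
    nav = cache.get_or_render("nav", 3600, render_nav)   # cached for an hour
    greeting = f"<p>Hello, {username}</p>"                # dynamic, never cached
    return nav + greeting


fake_now = [0.0]                                   # fake clock for the demo
cache = FragmentCache(clock=lambda: fake_now[0])
page_a = render_page(cache, "alice")
page_b = render_page(cache, "bob")                 # reuses the cached nav
print(render_calls["nav"])
```

Each page is still assembled per request, but the navigation fragment is rendered only once until its TTL expires.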

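Figure 3.3

3.4 Step 4: Data caching

After ESI or a similar technique improves caching further, the load does drop again. But as before, growing traffic makes the system slow once more. Investigation may show that the system fetches the same data repeatedly in some places, such as user information. You then consider caching this data as well, and cache it in local memory. Once the change is made, the result matches expectations: response speed recovers and the database load drops considerably. One technology that can be used here is memcached.

Figure 3.4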

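3.5 Step 5: Add a web server

The good times do not last. As traffic grows again, the load on the web server machine becomes quite high at peak times, and you start thinking about adding a second web server. This also addresses availability, since otherwise the site is unusable whenever the single web server goes down. Adding a web server raises some typical problems:

1. How to distribute requests across the two machines. The usual options are Apache's built-in load balancing or a software load balancer such as LVS.

2. How to keep state such as user sessions in sync. Options include writing it to the database, writing it to shared storage, using cookies, or synchronizing session data between servers.

3. How to keep cached data in sync, for example the user data cached earlier. The usual options are cache synchronization or a distributed cache.

4. How to keep features such as file upload working. The usual option is a shared file system or shared storage.

Once these problems are solved, the site finally runs on two web servers and the system returns to its former speed.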
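The first problem in the list above, distributing requests across machines, can be illustrated with the simplest scheduling policy, round robin. This is only a sketch of the policy, not how Apache or LVS implement it; the class name and the backend addresses `web1:8080` and `web2:8080` are hypothetical.

```python
import itertools


class RoundRobinBalancer:
    """Hands out backends in a fixed rotating order."""

    def __init__(self, backends):
        if not backends:
            raise ValueError("at least one backend is required")
        self._cycle = itertools.cycle(list(backends))

    def next_backend(self):
        return next(self._cycle)


balancer = RoundRobinBalancer(["web1:8080", "web2:8080"])  # hypothetical hosts
assigned = [balancer.next_backend() for _ in range(4)]
print(assigned)  # ['web1:8080', 'web2:8080', 'web1:8080', 'web2:8080']
```

Because consecutive requests from the same user land on different machines under this policy, session data and cached data have to be shared or synchronized, which is exactly what problems 2 and 3 in the list are about.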

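Figure 3.5

3.6 Step 6: Split the database

After enjoying a period of rapid traffic growth, the system slows down again. This time investigation shows fierce contention for database connections among write and update operations. The options at this point are database clustering and splitting the data across several databases. Some databases do not support clustering well, so splitting databases becomes the more common strategy. Splitting means modifying the existing application. Once the changes are done, the goal is met: the system recovers and is even faster than before.

Figure 3.6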

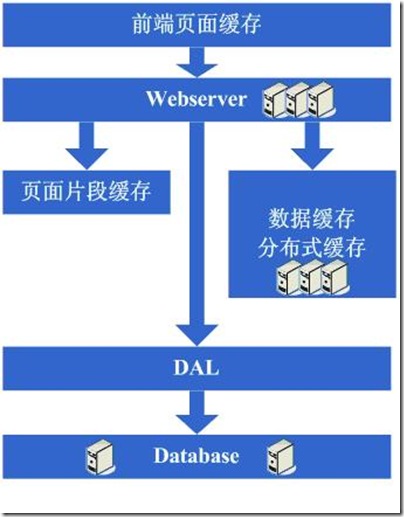

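3.7 Step 7: Table sharding, DAL, and distributed caching

As the system keeps running, the data volume grows substantially, and queries are still somewhat slow even after the database split. So, following the same idea, you start splitting tables, which inevitably requires some changes to the application. At this point you may find that having the application itself track the rules for splitting databases and tables is rather complicated, and the idea arises of adding a general framework that handles data access across the split databases and tables. In eBay's architecture this corresponds to the DAL. This evolution takes a relatively long time, and the general framework may not be started until the table split is finished. During this stage the earlier cache synchronization scheme may also break down: the data volume is now too large to keep the cache locally and synchronize it, so a distributed cache is needed. After another round of investigation and pain, the bulk of the cached data is finally moved to a distributed cache.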
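A data access layer of this kind hides the routing rule from application code. Below is a minimal sketch under stated assumptions: rows are routed by integer user ID with a plain modulo rule, and the `ShardRouter` class and the `user_db_N` / `user_N` naming scheme are hypothetical, not taken from eBay's DAL.

```python
class ShardRouter:
    """Maps a user ID to the database and table that hold its row."""

    def __init__(self, db_count, tables_per_db):
        self.db_count = db_count
        self.tables_per_db = tables_per_db

    def locate(self, user_id):
        total_slots = self.db_count * self.tables_per_db
        slot = user_id % total_slots                       # assumed routing rule
        db_index, table_index = divmod(slot, self.tables_per_db)
        return f"user_db_{db_index}", f"user_{table_index}"


router = ShardRouter(db_count=2, tables_per_db=4)
print(router.locate(1001))  # ('user_db_0', 'user_1')
print(router.locate(1006))  # ('user_db_1', 'user_2')
```

Application code asks the router where a row lives instead of hard-coding the rule. A modulo rule is easy to reason about, but changing the number of databases or tables later means moving existing rows, which is one reason this step takes a long time in practice.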

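Figure 3.7

3.8 Step 8: Add more web servers

With the databases and tables split, the database load is fairly low, and you are back to happily watching traffic surge every day. Then one day the system starts to slow down again. You check the database first, and its load is normal. You then check the web servers and find that Apache is blocking many requests, even though the application server handles each request quickly. The request count is simply so high that requests queue up and responses slow down. This is manageable, and by now there is usually some money available, so more web servers are added. Adding them can bring several challenges:

1. Software load balancing with Apache or LVS can no longer schedule the huge volume of web traffic (request connections, network throughput, and so on). If the budget allows, the solution is to buy hardware load balancers such as F5, NetScaler, or Alteon. If it does not, the solution is to divide the application logically into categories and spread them across separate software load-balancing clusters.

2. Existing solutions for state synchronization, file sharing, and so on may become bottlenecks and need to be improved. At this stage you may write a distributed file system tailored to the site's business needs.

With this work done, the site enters what looks like a perfect era of unlimited scaling: when traffic grows, the answer is simply to keep adding web servers.

Figure 3.8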

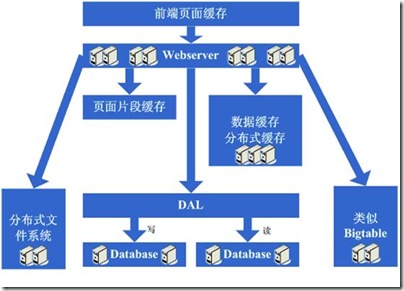

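3.9 Step 9: Read/write splitting and cheap storage

One day this perfect era comes to an end as well, and the database nightmare returns. So many web servers have been added that database connections are once again in short supply, and the databases and tables have already been split. Analyzing the database load may show a high read-to-write ratio, which usually suggests separating reads from writes, although this is not easy to implement. You may also find that storing some data in the database is wasteful, or takes up too many database resources. The architecture at this stage may therefore evolve toward read/write splitting, together with building cheaper storage solutions, for example something like BigTable.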
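Read/write splitting can be pictured as a small router in front of the database connections. The sketch below assumes one primary and several read replicas, and it classifies a statement only by its first keyword; the `ReadWriteRouter` class and the server names are hypothetical.

```python
import itertools


class ReadWriteRouter:
    """Sends SELECT statements to replicas and everything else to the primary."""

    def __init__(self, primary, replicas):
        self.primary = primary
        self._replicas = itertools.cycle(replicas) if replicas else None

    def route(self, sql):
        words = sql.lstrip().split(None, 1)
        verb = words[0].upper() if words else ""
        if verb == "SELECT" and self._replicas is not None:
            return next(self._replicas)   # spread reads across the replicas
        return self.primary               # writes (and unknowns) go to the primary


router = ReadWriteRouter("db-primary", ["db-replica-1", "db-replica-2"])
targets = [router.route(q) for q in [
    "SELECT * FROM orders WHERE id = 1",
    "UPDATE orders SET status = 'paid' WHERE id = 1",
    "select count(*) from orders",
]]
print(targets)  # ['db-replica-1', 'db-primary', 'db-replica-2']
```

Part of what makes the real thing hard is replication delay: a read sent to a replica immediately after a write may not see that write yet, so reads that must be fresh usually still go to the primary. The sketch does not handle that case.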

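Figure 3.9

3.10 Step 10: The era of large distributed applications and clusters of cheap servers

After this long and painful process, a perfect era arrives once more: adding web servers is enough to support ever higher traffic. For a large website, the importance of popularity is beyond doubt, and as popularity grows, feature requests start to explode. Suddenly you realize that the web application deployed on the web servers has become enormous. When several teams modify it at the same time, it is very inconvenient, and reuse is poor: every team ends up duplicating some work. Deployment and maintenance are also a burden, because copying and starting a huge application package on N machines takes a lot of time, and problems are hard to track down. Worse, a bug in one part of the application can easily take down the whole site. Tuning is difficult too, because the application on each machine does everything and cannot be tuned for a specific purpose. Given this analysis, you resolve to split the system by responsibility, and a large distributed application is born. This step usually takes a long time because it brings many challenges:

1. A distributed system needs a high-performance, stable communication framework that supports several different communication and remote invocation styles.

2. Splitting a huge application takes a long time and requires sorting out the business and controlling dependencies between systems.

3. The large distributed application must be operated well, which covers dependency management, runtime status management, error tracing, tuning, monitoring, and alerting.

After this step the architecture enters a relatively stable phase. Large numbers of cheap machines can now support the huge traffic and data volume, and with this architecture and the experience gained from all these evolutions, a variety of other methods can be applied to support even higher traffic.

Figure 3.10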

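4. Analysis

As the platform grows bigger and stronger, it may well head toward a custom operating system, custom databases, even custom hardware: customizing everything that can be customized.

In choosing servers, architecture, components, and other technology, there are two main directions: (1) mature commercial products, and (2) open source plus in-house development. Each is analyzed briefly below.

(1) Pros and cons of commercial products

- One advantage of commercial products is that they are mature, stable, and quick to set up.

- One disadvantage is cost: license fees rise as servers are added, so the overall cost is high.

- Commercial products are general-purpose, hard to customize, and cannot meet specific needs.

(2) Pros and cons of open source plus in-house development

- The source code is open and controllability is good: problems can be solved at the lowest level, and extensibility is good.

- The short-term investment of time and people is large and early results come slowly; in the long term the payoff is large and the results are clear.

- Software and hardware can be optimized continuously at many levels to fully meet specific needs.

Commercial products and open source plus in-house development each have strengths and weaknesses, and they complement each other. Choose according to the use case, and combine them where needed.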

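5. Summary

The mainstream for large websites today is LAMP (Linux + Apache + MySQL + PHP) or an extension of it. Extensions include adding caching and adding middleware (mostly written in Java, C, C++, or .NET, or bought as mature products; IBM has many mature middleware products). Alternatively, some parts are replaced, for example using recently popular scripting languages such as Python, Ruby, or Lua on the front end, or using NoSQL or a file system for data storage. These choices have historical, cost, and business reasons, and some arise because a growing site has to meet new requirements and solve specific problems.

Some e-commerce sites started on the Microsoft stack (Windows + .NET + MSSQL) and later brought in other stacks as needed, for example Jingdong, Dangdang, and Vancl. Others have stayed on the Microsoft stack throughout: abroad, Microsoft's official site, Stack Overflow, and the once-glorious MySpace.

In fact, the current trend is toward mixed stacks rather than a single one. The technology stack is neither uniform nor fixed; the right technology is chosen for the right problem as the business, the site, and the technology itself develop.

Changing an architecture is no small matter, and it is important to the development of both the business and the site. It cannot be changed every few days or every month or so, nor changed for no reason. It should be changed at critical moments, when there is a need, or upgraded periodically according to plan.

I think there is one approach that can help us choose. Based on our goals, that is, the estimated business volume, transaction volume, and number of users, we define several platform development milestones or time periods. We then plan technology selection milestones according to those platform milestones. Besides scale, we also need to consider the type of business, the types of data generated, and the processing those data require.

We can set a few milestones first, with their dates decided from the business estimates above. Choose the current architecture and plan storage capacity according to the business requirements of the first milestone. Then design ahead for the transition from the first milestone to the second to ensure a smooth transition later, or at least leave room for extension. This is sometimes difficult but should be done as far as possible.

One to two months before the second milestone, discuss and design the architecture for it. By then the original business estimates for the second milestone may have changed, or new technical options may have appeared, and both can be taken into account in the design in time. Architecture changes for later milestones follow the same pattern.

There are also unexpected situations, because something unforeseen always happens. Some arise from business growth and some are forced on us. The architecture is upgraded in response to these situations as well.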

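References

QCon Global Software Development Conference

QCon is a leading global technology conference organized by InfoQ, held every year in London, Beijing, Tokyo, New York, São Paulo, Hangzhou, and San Francisco. Since it was first held in March 2007, nearly ten thousand architects, project managers, team leaders, and senior developers from fields including traditional manufacturing, finance, telecommunications, the Internet, and aerospace have attended.

Following the principle of "facilitating the spread of knowledge and innovation in software development", QCon's topics are designed for mid-level and senior technical staff, with content drawn from practice and oriented toward the community. Speakers share technology trends and best practices on key and popular topics. As the organizer, InfoQ works to give attendees a good environment for learning and exchange.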

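1. Taobao

LAMP, .NET, Nginx, Windows, Linux, Oracle, MySQL, Lua, NoSQL

Taobao is a large e-commerce site with more than 100 million items online, average daily transactions of more than 200 million RMB, and nearly 80 million registered users. It is the largest shopping site in Asia.

· Daily average IPs [weekly average] 134700000

· Daily average PVs [weekly average] 2559300000

· Daily average IPs [monthly average] 137280000

· Daily average PVs [monthly average] 2608320000

· Daily average IPs [three-month average] 130620000

· Daily average PVs [three-month average] 2481780000

Hosts all of Taobao's open-source projects.

File systems developed in-house in China are currently very rare. Taobao has explored and practiced effectively in this area. Taobao File System (TFS), the distributed file system used inside Taobao, is specially optimized for random read and write access to massive numbers of small files, and it stores all the images, product descriptions, and similar data for Taobao's main site.

Tair is a key/value data storage system developed in-house by Taobao and used there on a large scale. When you log in to Taobao, view a product detail page, or chat with friends on Tao Jianghu, you are interacting with Tair directly or indirectly.

LinkedIn and some Taobao features use Node.js.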

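2. Tencent

PHP, ASP

· Daily average IPs [weekly average] 222000000

· Daily average PVs [weekly average] 1776000000

· Daily average IPs [monthly average] 218640000

· Daily average PVs [monthly average] 1749120000

· Daily average IPs [three-month average] 213930000

· Daily average PVs [three-month average] 1711440000

3. Yupoo

Erlang, Python, PHP, Redis

3. Ganji

PHP, MySQL

4. Sina Weibo

PHP

Tang Fulin is a senior engineer on the Sina Weibo open platform, currently responsible for underlying services such as the t.cn short links, user relationships, and counters. He previously worked on several Lucene-based vertical search engines, including full-text search for Sina Mail, and on operations for Sina iAsk and Sina Podcast, and he has extensive experience building Internet infrastructure that carries large data volumes and high concurrency. In the open platform track at QCon Hangzhou 2011 he gave a talk titled "Redis in Practice on the Sina Weibo Open Platform" and had a lively discussion with attendees. After the conference, InfoQ China interviewed him.

The number of registered Sina Weibo users grew from 140 million to 200 million within three months. The follow and follower relationships between users are an order of magnitude larger still, and read volume is growing even faster. The Sina Weibo open platform's interfaces also expose many counters, which are read and written in huge volumes and demand both consistency and real-time behavior. The traditional MySQL + memcache approach was increasingly struggling in these scenarios, so the Sina Weibo open platform boldly adopted Redis, a rising star in the NoSQL field.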

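5. Facebook

LAMP

6. Twitter

LAMP, Ruby

Most of the tools Twitter uses are open source. Its structure uses Rails for the front end; C, Scala, and Java for the business layer in the middle; and MySQL for data storage. Everything is kept in RAM, and the database serves only as a backup. The Rails front end handles rendering, cache composition, database queries, and synchronous inserts. This front end is mostly glue around several client libraries, most of them written in C: a MySQL client, a Memcached client, a JSON client, and others.

The middleware uses Memcached, Varnish for page caching, and Kestrel, a message queue written in Scala. A Comet server, also written in Scala, is being planned; it will be useful when clients want to track a large number of tweets.

Twitter started as "a content management platform rather than a messaging platform". Moving from the original model based on aggregated reads to the current messaging model, in which every user needs to be updated with the latest tweets, required a great many optimizations. The changes fall mainly into three areas: caching, the message queue, and the Memcached client.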

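7. eBay

LAMP

8. Vancl

.NET, C, C++

· Daily average IPs [weekly average] 6990000

· Daily average PVs [weekly average] 62910000

· Daily average IPs [monthly average] 7650000

· Daily average PVs [monthly average] 68850000

· Daily average IPs [three-month average] 7983000

· Daily average PVs [three-month average] 71847000

9. Yihaodian

Java, JSP, Linux, MySQL, PostgreSQL, Oracle

· Daily average IPs [weekly average] 2610000

· Daily average PVs [weekly average] 23490000

· Daily average IPs [monthly average] 2793000

· Daily average PVs [monthly average] 25137000

· Daily average IPs [three-month average] 2583000

· Daily average PVs [three-month average] 20664000

10. Yintai

.NET, JSP, Java

11. Dangdang

.NET, PHP, jQuery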

CDN

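CDN stands for Content Delivery Network. The basic idea is to avoid, as far as possible, the bottlenecks and links on the Internet that can affect the speed and stability of data transfer, so that content is delivered faster and more reliably. A CDN places node servers throughout the network to form an intelligent virtual network layered on top of the existing Internet. Using combined information such as network traffic, the connection and load status of each node, the distance to the user, and response time, the CDN redirects each user request in real time to the service node closest to that user. The aim is to let users fetch content from nearby, relieve Internet congestion, and improve the response speed of websites.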

http://baike.baidu.com/view/21895.htm

1. Architecture design diagrams of large websites

2. PlentyOfFish website architecture

http://www.dbanotes.net/arch/plentyoffish_arch.html

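More than 30 million hits per day

Dynamic outbound data is compressed, which costs 30% of CPU capacity but saves bandwidth

Load balancing uses ServerIron (Conf Refer) (ServerIron is easy to use and has more features than NLB)

Three SQL Server machines in total: one is the primary database, and the other two are read-only databases that serve queries; a great deal of effort goes into optimizing the database

3. YouTube architecture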

http://www.dbanotes.net/opensource/youtube_web_arch.html

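Very large data traffic: 1 billion downloads and 65,000 uploads per day

Most of the code is written in Python

Some of the web servers are Apache, running in FastCGI mode. Video content is served by Lighttpd. (Douban in China uses Lighttpd.)

A separate group of servers handles the video load, with some optimizations to the cache and the operating system. Heavily viewed videos are placed on a CDN, so YouTube's own servers carry only a small share of the traffic

MySQL stores metadata such as user information and video information.

Partitioning at the business level (based on user name or ID, with the lookup mechanism controlled by the application)

4. Yahoo! community architecture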

http://www.dbanotes.net/arch/yahoo_arch.html

5. Amazon's Dynamo architecture

http://www.dbanotes.net/techmemo/amazon_dynamo.html

6. Caibangzi (caibangzi.com) website architecture

http://www.dbanotes.net/arch/caibangzi_web_arch.html

7. On the system architecture of large, high-concurrency, high-load websites (updated)

http://www.toplee.com/blog/71.html

from:http://www.cnblogs.com/virusswb/archive/2012/01/10/2318169.html